Oct 31, 2018 — I can do this by using the command in Hadoop: hadoop fs -ls hdfs://sandbox.hortonworks.com/demo/ I ... or Spark. Can someone tell me how to ...

In order to use Spark, you need to have a home directory on HDFS. ... all files and directories have 1) an owner:group, and 2) read/write/execute permissions for ...
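The owner:group and read/write/execute model mentioned above can be illustrated with a small sketch that parses one line of `hadoop fs -ls` output. It assumes the usual eight-column listing layout (mode, replication, owner, group, size, date, time, path). The sample line, the user names, and the helper names `parse_ls_line` and `can_write` are hypothetical, and the check ignores the HDFS superuser and ACLs.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class HdfsEntry:
    is_dir: bool
    permissions: str  # nine characters, e.g. "rwxr-xr-x"
    owner: str
    group: str
    path: str


def parse_ls_line(line: str) -> HdfsEntry:
    """Parse one entry line of `hadoop fs -ls` output (assumed 8-column layout)."""
    # Split at most 7 times so a path containing spaces stays in one piece.
    fields = line.strip().split(None, 7)
    if len(fields) != 8:
        raise ValueError(f"unexpected listing line: {line!r}")
    mode, _replication, owner, group, _size, _date, _time, path = fields
    # mode[0] is the entry type; mode[1:10] are the rwx bits (a trailing "+" is dropped).
    return HdfsEntry(mode[0] == "d", mode[1:10], owner, group, path)


def can_write(entry: HdfsEntry, user: str, groups: Iterable[str]) -> bool:
    """Simplified check: pick the owner, group, or other bits, then test 'w'."""
    if user == entry.owner:
        bits = entry.permissions[0:3]
    elif entry.group in set(groups):
        bits = entry.permissions[3:6]
    else:
        bits = entry.permissions[6:9]
    return bits[1] == "w"


# Hypothetical listing line for a user's HDFS home directory.
sample = "drwxr-xr-x   - alice analysts          0 2018-10-31 12:00 /user/alice"
entry = parse_ls_line(sample)
print(entry.owner, entry.group, entry.permissions, can_write(entry, "alice", []))
```

In this sample only the owner has write access, which is the typical situation for a home directory that a Spark job writes its staging files into.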

spark read hdfs directory


This section contains information on running Spark jobs over HDFS data. Specifying Compression. To add a compression library to Spark, you can use the --jars ...

If a directory contains multiple files, you can read all of them in a single statement: val rdd = sc.textFile("hdfs:/tmp/directory_name").

spark read data from hdfs directory

In Hadoop 1.4, we are not provided with the listFiles method, so we use listStatus to get directories. It doesn't have a recursive option, but it is easy to ...

... xml file under the Hadoop configuration folder. In this file, look for the fs.defaultFS property and take its value. For example, you will have the value in ...

In addition, if you wish to access an HDFS cluster, you need to add a ... wholeTextFiles lets you read a directory containing multiple small text files, and returns ...
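The fs.defaultFS lookup described above can be sketched with the Python standard library. In a standard Hadoop installation this property normally lives in core-site.xml. The sample XML, the host name `namenode.example.com`, and the helper names `read_default_fs` and `qualify` are assumptions made for illustration, not values taken from a real cluster.

```python
import xml.etree.ElementTree as ET
from typing import Optional

# Hypothetical core-site.xml content; a real file would be read from the
# Hadoop configuration folder instead.
SAMPLE_CORE_SITE = """<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://namenode.example.com:8020</value>
  </property>
</configuration>
"""


def read_default_fs(core_site_xml: str) -> Optional[str]:
    """Return the value of fs.defaultFS, or None if the property is absent."""
    root = ET.fromstring(core_site_xml)
    for prop in root.iter("property"):
        if (prop.findtext("name") or "").strip() == "fs.defaultFS":
            return (prop.findtext("value") or "").strip()
    return None


def qualify(default_fs: str, path: str) -> str:
    """Join the default file system URI and an absolute HDFS path."""
    return default_fs.rstrip("/") + "/" + path.lstrip("/")


default_fs = read_default_fs(SAMPLE_CORE_SITE)
if default_fs is not None:
    # The resulting URI is the kind of string passed to sc.textFile(...).
    print(qualify(default_fs, "/tmp/directory_name"))
```

The fully qualified URI is only needed when Spark is not already picking up the cluster's Hadoop configuration. Otherwise a bare path such as /tmp/directory_name resolves against fs.defaultFS automatically.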

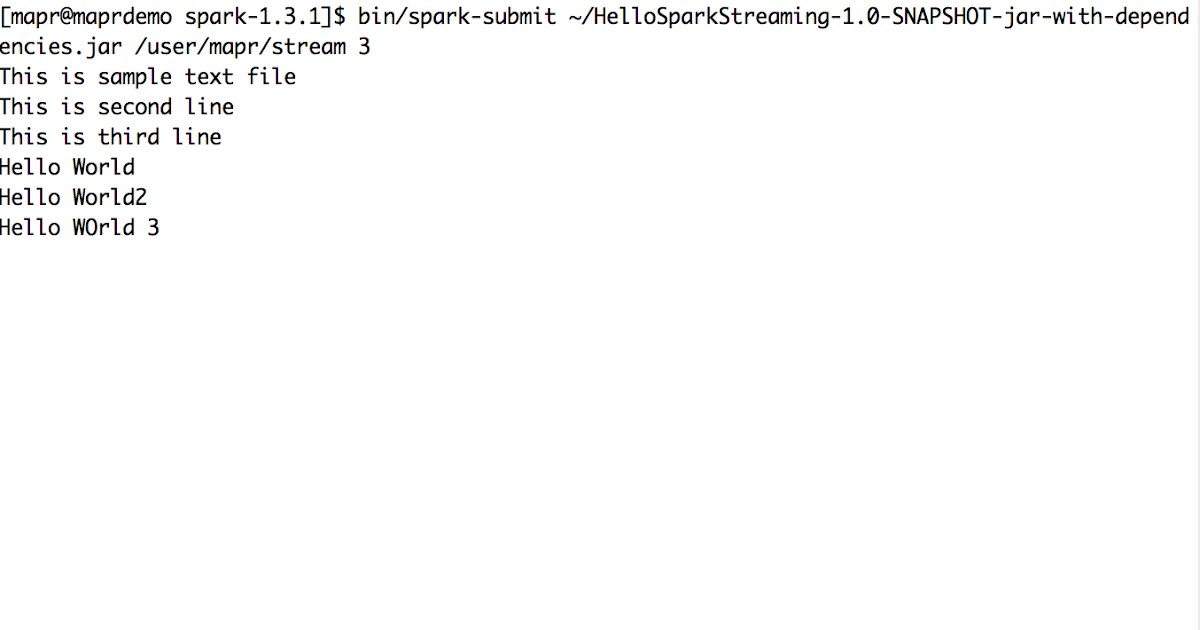

I wanted to build a simple Spark Streaming application that monitors a particular directory in HDFS and, whenever a new file shows up, prints its ...

I am unable to read multiple files from a directory in Spark Streaming. I have been using val tweets = ssc.fileStream("hdfs://localhost:8020/user/hdfs/sparkinput/").

Oct 29, 2018: Using PySpark: hadoop = sc._jvm.org.apache.hadoop; fs = hadoop.fs.FileSystem; conf = hadoop.conf.Configuration(); path = hadoop.fs. ...

... getOrCreate(). Read in HDFS. To read using SparkSession: %pyspark df_load = sparkSession.read.csv('/hdfs/full/path/to/folder'); df_load.show(). Write to HDFS ...

You can use org.apache.hadoop.fs.FileSystem, specifically FileSystem.listFiles([path], true). And with Spark ... FileSystem.get(sc. ...

Feb 10, 2019: ... get(spark.sparkContext.hadoopConfiguration) // Delete directories recursively using the FileSystem class: fs.delete(new Path("/bigdata_etl/data"), true) ...

... toString; val data = sparkSession.read.parquet(dataPath); val Row(idf: Vector) ... non-existent directory to test whether log make the dir; val metadataLog = new ...

In my previous post, I demonstrated how to write and read Parquet files in Spark/Scala. The Parquet file destination is a local folder. Write and Read Parquet ...

Scala: list files in an HDFS directory. In a recent project, you need to list all the files in a directory on HDFS, filter out the ... Java API to read all the files in an HDFS directory.